ByteRover includes a built-in LLM with limited free usage (requires a logged-in ByteRover account). Connect your own provider to remove limits and choose any supported model; no ByteRover account is needed.

Connect a provider

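Providers can be connected from either the TUI or the CLI. The steps below follow the TUI.

Open the provider selector

Run /providers. The list marks the active provider with (Current) and previously connected ones with [Connected].

Select and connect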

Select an unconnected provider. Providers that support OAuth (currently OpenAI) will present a choice — “Sign in with OAuth” to authenticate via your browser, or “API Key” to enter a key manually. Other providers prompt for an API key directly. ByteRover validates credentials before saving. After connecting, a model selector opens automatically.

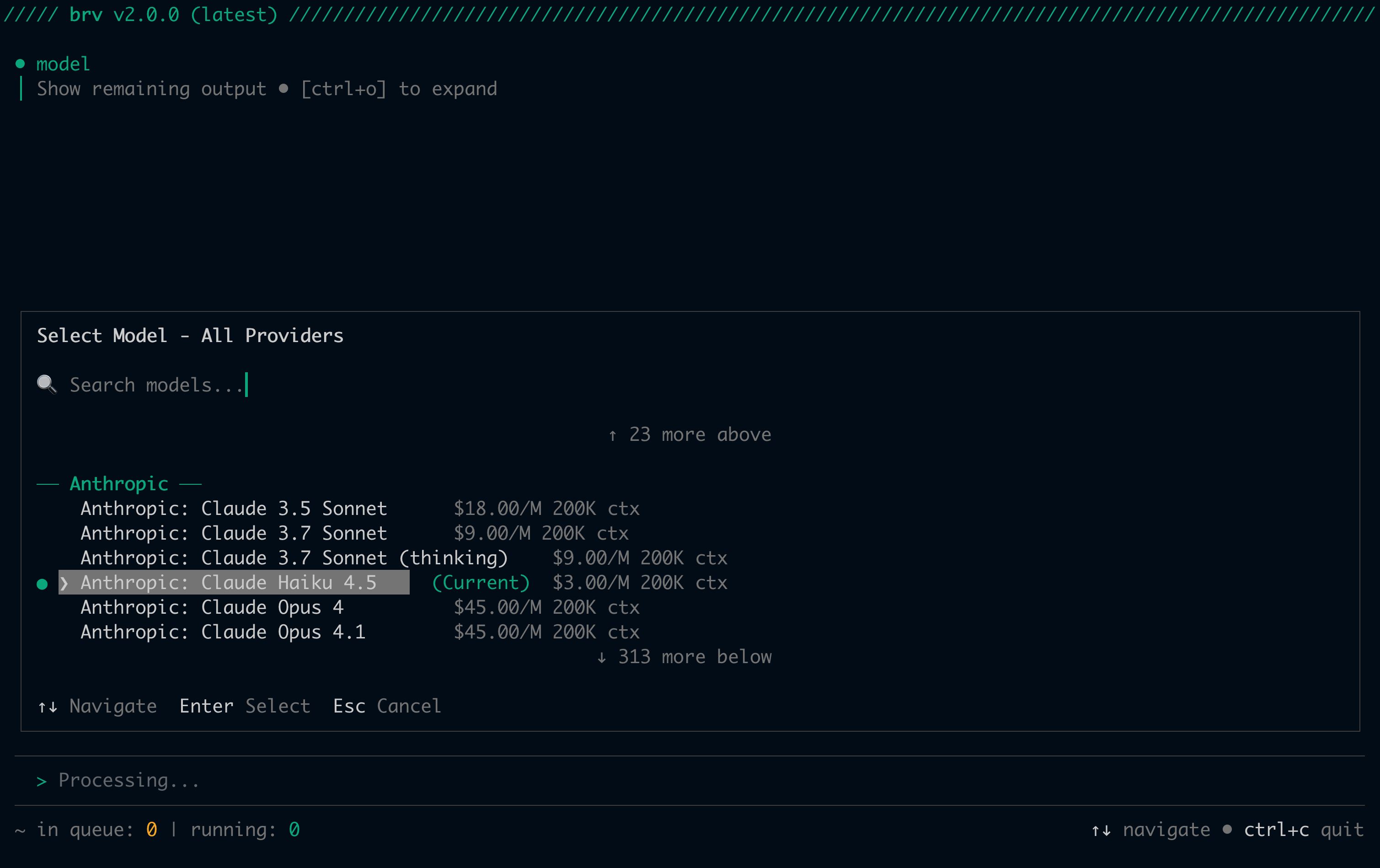

Select a model

Models can be selected from either the TUI or the CLI.

Model lists are cached for 1 hour. ByteRover refreshes automatically when the cache expires.
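
The one-hour cache can be pictured as a small time-to-live cache. The sketch below is an illustration only, not ByteRover's implementation: ModelListCache, the fetch callback, and the per-provider keying are hypothetical names and assumptions.

```python
import time
from typing import Callable, Dict, List, Tuple

CACHE_TTL_SECONDS = 60 * 60  # one hour, matching the documented cache lifetime


class ModelListCache:
    """Hypothetical cache: one model list per provider ID, refreshed after the TTL."""

    def __init__(self, fetch: Callable[[str], List[str]],
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch    # assumed callback that asks the provider for its models
        self._clock = clock    # injectable so expiry can be tested without waiting
        self._entries: Dict[str, Tuple[float, List[str]]] = {}

    def get(self, provider_id: str) -> List[str]:
        now = self._clock()
        entry = self._entries.get(provider_id)
        if entry is not None and now - entry[0] < CACHE_TTL_SECONDS:
            return entry[1]  # still fresh: serve the cached list
        models = self._fetch(provider_id)  # missing or expired: refresh
        self._entries[provider_id] = (now, models)
        return models
```

Injecting the clock keeps the expiry logic testable; a real client would pass a function that queries the provider's model-listing endpoint as fetch.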

Switch providers

Providers can be switched from either the TUI or the CLI.

Disconnect a provider

Disconnecting removes the stored API key and reverts to ByteRover’s built-in LLM if the disconnected provider was active. Providers can be disconnected from either the TUI or the CLI.

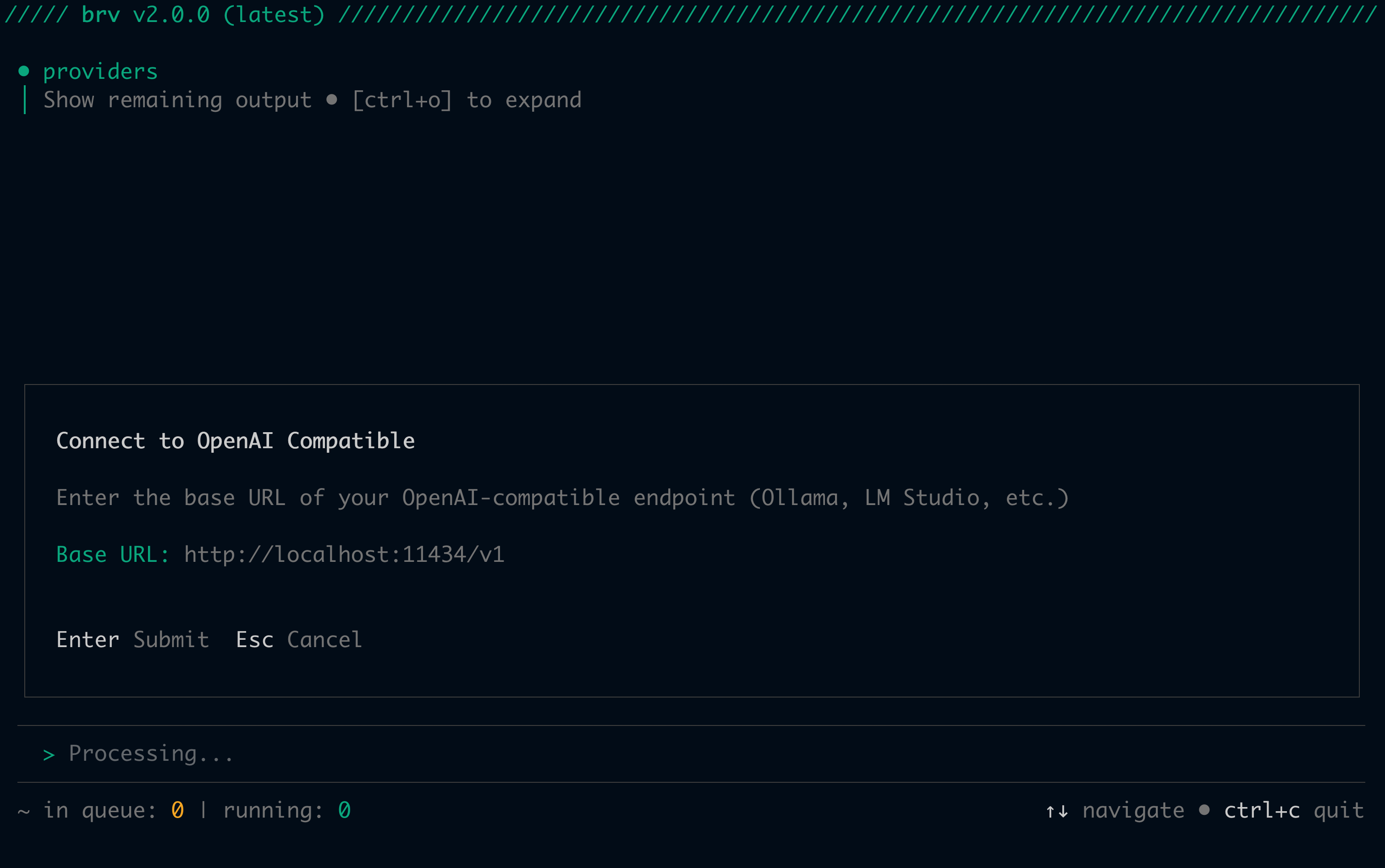

Local LLM setup

The openai-compatible provider connects to any OpenAI-compatible local server, such as Ollama, LM Studio, vLLM, or a custom endpoint. You provide the base URL when connecting.
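
Before connecting, it can help to confirm that the base URL really speaks the OpenAI-compatible API. The sketch below is not part of ByteRover; it assumes the server exposes the standard model-listing route (GET <base-url>/models), and the function names are illustrative.

```python
import json
import urllib.request
from typing import List, Optional


def models_url(base_url: str) -> str:
    """Build the model-listing URL for an OpenAI-compatible base URL."""
    return base_url.rstrip("/") + "/models"


def list_models(base_url: str, api_key: Optional[str] = None,
                timeout: float = 5.0) -> List[str]:
    """Return the model IDs the server advertises; raises on connection or HTTP errors."""
    request = urllib.request.Request(models_url(base_url))
    if api_key:  # many local servers accept requests without a key
        request.add_header("Authorization", "Bearer " + api_key)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        payload = json.load(response)
    # OpenAI-style responses wrap the models in a "data" array of objects with an "id".
    return [item["id"] for item in payload.get("data", [])]
```

With a local Ollama server running, list_models("http://localhost:11434/v1") should return the names of the models it has pulled; a connection error suggests the base URL or port is wrong.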

Connect the provider

In the TUI, run /providers and select OpenAI Compatible. ByteRover prompts you to enter the base URL of your endpoint (for example, http://localhost:11434/v1 for Ollama). An API key prompt follows; leave it blank if your server doesn’t require one.

Supported providers

ByteRover supports 18 providers:

| Provider | ID | Default model | Get API key |
|---|---|---|---|
| ByteRover (built-in) | byterover | n/a | No API key; requires brv login |
| OpenRouter | openrouter | anthropic/claude-sonnet-4.5 | openrouter.ai/keys |
| Anthropic | anthropic | claude-sonnet-4-5-20250929 | console.anthropic.com |
| OpenAI | openai | gpt-4.1 | platform.openai.com |
| Google Gemini | google | gemini-3-flash-preview | aistudio.google.com |
| xAI (Grok) | xai | grok-3 | console.x.ai |
| Groq | groq | openai/gpt-oss-120b | console.groq.com |
| Mistral | mistral | mistral-large-latest | console.mistral.ai |
| DeepInfra | deepinfra | meta-llama/Llama-4-Maverick-17B-128E-Instruct-FP8 | deepinfra.com |
| Cohere | cohere | command-a-03-2025 | dashboard.cohere.com |
| Together AI | togetherai | meta-llama/Llama-4-Maverick-17B-128E-Instruct-FP8 | api.together.ai |
| Perplexity | perplexity | sonar-pro | perplexity.ai |
| Cerebras | cerebras | gpt-oss-120b | cloud.cerebras.ai |
| Vercel | vercel | v0-1.5-md | v0.dev |
| MiniMax | minimax | MiniMax-M2.7 | platform.minimax.io |
| GLM (Z.AI) | glm | glm-4.7 | chat.z.ai |
| Moonshot AI (Kimi) | moonshot | kimi-k2.5 | platform.moonshot.ai |
| OpenAI Compatible | openai-compatible | llama3 | Optional (depends on endpoint) |

Recommended LLM list

The following models have been verified and are recommended for use with ByteRover:

| Provider | Model |
|---|---|
| Anthropic | claude-opus-4.6 |
| Anthropic | claude-sonnet-4.6 |
| Anthropic | claude-3.7-sonnet |
| Anthropic | claude-haiku-4.5 |
| Anthropic | claude-3-haiku |
| Google (Gemini) | gemini-3.1-pro-preview |
| Google (Gemini) | gemini-3.1-flash-lite-preview |
| Google (Gemini) | gemini-3-flash-preview |
| OpenAI | gpt-5.4 |
| OpenAI | gpt-5.2 |
| OpenAI | gpt-5.1 |
| OpenAI | gpt-5.4-mini |
| OpenAI | gpt-5 |
| OpenAI | gpt-4.5 |
| OpenAI | gpt-4.1 |
| OpenAI | gpt-4.1-nano |
| OpenAI | gpt-4o |
| OpenAI | gpt-4o-mini |
| OpenAI | o3 |
| OpenAI | o3-mini |
| ZAI | glm-5 |
| ZAI | glm-4.7 |
| ZAI | glm-4.6 |
| ZAI | glm-4.5 |
| ZAI | glm-4.5-flash |

Environment variable auto-detection

If an API key is already set in your environment, ByteRover detects it automatically, so no manual entry is needed when connecting.

| Provider | Environment variable(s) |
|---|---|
| Anthropic | ANTHROPIC_API_KEY |
| OpenAI | OPENAI_API_KEY |
| OpenRouter | OPENROUTER_API_KEY |
| Google Gemini | GOOGLE_API_KEY, GEMINI_API_KEY |
| xAI | XAI_API_KEY |
| Groq | GROQ_API_KEY |
| Mistral | MISTRAL_API_KEY |
| DeepInfra | DEEPINFRA_API_KEY |
| Cohere | COHERE_API_KEY |
| Together AI | TOGETHER_API_KEY, TOGETHERAI_API_KEY |
| Perplexity | PERPLEXITY_API_KEY |
| Cerebras | CEREBRAS_API_KEY |
| Vercel | VERCEL_API_KEY |
| MiniMax | MINIMAX_API_KEY |
| GLM | ZHIPU_API_KEY |
| Moonshot AI | MOONSHOT_API_KEY |
| OpenAI Compatible | OPENAI_COMPATIBLE_API_KEY |
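
To check which key would be picked up on your machine, a lookup like the following can help. It is a sketch of the idea rather than ByteRover's detection logic: the mapping covers only a few rows of the table, and checking variables in the listed order and ignoring blank values are assumptions.

```python
import os
from typing import Dict, Mapping, Optional, Tuple

# A few rows from the table above, keyed by provider ID.
# Assumption: when a provider lists two variables, the first one set wins.
PROVIDER_ENV_VARS: Dict[str, Tuple[str, ...]] = {
    "anthropic": ("ANTHROPIC_API_KEY",),
    "openai": ("OPENAI_API_KEY",),
    "google": ("GOOGLE_API_KEY", "GEMINI_API_KEY"),
    "togetherai": ("TOGETHER_API_KEY", "TOGETHERAI_API_KEY"),
    "glm": ("ZHIPU_API_KEY",),
}


def detect_api_key(provider_id: str,
                   env: Mapping[str, str] = os.environ) -> Optional[Tuple[str, str]]:
    """Return (variable name, value) for the first non-empty key, or None."""
    for name in PROVIDER_ENV_VARS.get(provider_id, ()):
        value = env.get(name, "").strip()
        if value:  # treat empty or whitespace-only values as unset
            return name, value
    return None
```

For example, after export GEMINI_API_KEY=... in your shell, detect_api_key("google") returns that variable's name and value unless GOOGLE_API_KEY is also set.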

Hot-swap

No restart is required when switching providers or models. When you switch, ByteRover broadcasts a provider:updated event and agent processes pick up the new configuration at the start of their next task. If tasks are already running, the swap is deferred until all in-flight tasks complete.

Two behaviors depending on what changed:

- Provider changed — a new session is created. In-memory conversation history is cleared (history formats are incompatible across providers).

- Model only changed — the session ID is reused, but in-memory conversation history is still lost on the new session manager.
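
The deferral and session rules above can be sketched as a small state machine. This is an illustrative model only: AgentProcess and its fields and methods are hypothetical names, not ByteRover internals.

```python
import uuid
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class AgentProcess:
    """Hypothetical agent process that applies provider/model swaps only when idle."""
    provider: str
    model: str
    session_id: str = field(default_factory=lambda: uuid.uuid4().hex)
    history: List[str] = field(default_factory=list)
    in_flight: int = 0
    pending: Optional[Tuple[str, str]] = None

    def on_provider_updated(self, provider: str, model: str) -> None:
        # The event only records the new configuration; nothing changes yet.
        self.pending = (provider, model)

    def start_task(self) -> None:
        if self.in_flight == 0:
            self._apply_pending()  # picked up at the start of the next task
        self.in_flight += 1

    def finish_task(self) -> None:
        self.in_flight -= 1
        if self.in_flight == 0:
            self._apply_pending()  # deferred swap runs once all tasks are done

    def _apply_pending(self) -> None:
        if self.pending is None or self.pending == (self.provider, self.model):
            self.pending = None
            return
        provider, model = self.pending
        self.pending = None
        if provider != self.provider:
            self.session_id = uuid.uuid4().hex  # provider changed: new session
        self.history = []  # in-memory history is lost in both cases
        self.provider, self.model = provider, model
```

A model-only swap keeps session_id but still empties history, which mirrors the second bullet above.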

Credential storage

API keys are stored in an AES-256-GCM encrypted local file, not your system keychain. Both files listed below use 0600 permissions (owner read/write only).

| File | Purpose |
|---|---|
| <data-dir>/.provider-keys | Random 32-byte encryption key, rotated on each save |
| <data-dir>/provider-credentials | AES-256-GCM encrypted JSON map of provider → API key |

Provider settings are kept separately in <config-dir>/providers.json.

Platform-specific paths:

| Platform | <config-dir> | <data-dir> |
|---|---|---|
| macOS | ~/Library/Application Support/brv | ~/Library/Application Support/brv |
| Linux | ~/.config/brv (or $XDG_CONFIG_HOME/brv) | ~/.local/share/brv (or $XDG_DATA_HOME/brv) |
| Windows | %APPDATA%/brv | %LOCALAPPDATA%/brv |
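
To locate these files on a given machine, the path rules and the 0600 permission can be combined in a short sketch. It is not ByteRover code: brv_dirs and write_private_file are hypothetical helpers, any platform other than macOS and Windows is treated as Linux by assumption, and the permission handling is POSIX-only.

```python
import os
import secrets
import sys
from typing import Mapping, Tuple


def brv_dirs(platform: str = sys.platform,
             env: Mapping[str, str] = os.environ,
             home: str = os.path.expanduser("~")) -> Tuple[str, str]:
    """Return (config_dir, data_dir) following the platform table above."""
    if platform == "darwin":
        base = home + "/Library/Application Support/brv"
        return base, base
    if platform.startswith("win"):
        return env["APPDATA"] + "/brv", env["LOCALAPPDATA"] + "/brv"
    # Assumption: everything else follows the Linux row, honoring the XDG overrides.
    config_home = env.get("XDG_CONFIG_HOME") or home + "/.config"
    data_home = env.get("XDG_DATA_HOME") or home + "/.local/share"
    return config_home + "/brv", data_home + "/brv"


def new_encryption_key() -> bytes:
    """Return 32 random bytes, the key length AES-256 requires."""
    return secrets.token_bytes(32)


def write_private_file(path: str, data: bytes) -> None:
    """Create or overwrite path with owner-only read/write permissions (POSIX)."""
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o600)
    with os.fdopen(fd, "wb") as handle:
        os.fchmod(handle.fileno(), 0o600)  # also tightens a file that already existed
        handle.write(data)
```

Running ls -l on the data directory returned by brv_dirs() should show -rw------- for both credential files if the permissions are as documented.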

Next steps

Quickstart

Full setup guide including provider configuration

CLI Reference

Complete reference for all brv providers and brv model commands